Datasets in Statistics and Cybersecurity

Datasets are fundamental to analyzing and modeling behavior in cybersecurity. They are the basis for detecting anomalies, training predictive models, and evaluating intrusion detection systems [1].

Types of Datasets

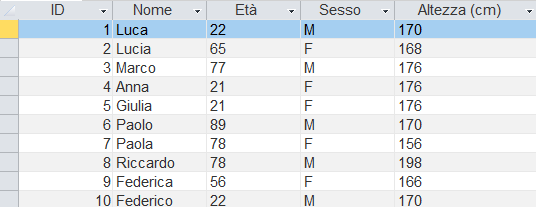

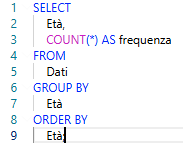

1. Structured

Data organized in tables with rows and columns, such as relational databases.

Example: access logs, user tables [2].

2. Unstructured

Data without a fixed schema, such as text, images, or video. Extracting information from them requires specialized processing techniques such as natural language processing (NLP) or computer vision [3].

3. Semi-Structured

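Data with partial structure, such as JSON or XML files. Their tags or keys act as self-describing metadata, which makes them easier to parse and analyze than free text even though records need not share a fixed schema [2].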
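To make the three types concrete, the sketch below turns an unstructured log line and semi-structured JSON records into structured rows. It is a minimal illustration under stated assumptions: the sshd-style log line, the JSON field names, and the helpers `parse_text_line`, `flatten`, and `json_lines_to_table` are all hypothetical. A regular expression works only because the wording of this one message is known in advance; it is not a substitute for the NLP techniques mentioned above.

```python
import json
import re

# Hypothetical inputs: an sshd-style free-text log line (unstructured)
# and two JSON-lines records (semi-structured). Field names are illustrative.
TEXT_LINE = ("Jan 12 10:01:22 host sshd[811]: Failed password for root "
             "from 203.0.113.7 port 52211 ssh2")
JSON_LINES = """\
{"user": "alice", "src": {"ip": "10.0.0.5", "port": 51522}, "action": "login", "ok": true}
{"user": "bob", "src": {"ip": "10.0.0.9"}, "action": "login", "ok": false}
"""

# A regex only recovers fields whose wording is known in advance.
FAILED_LOGIN = re.compile(
    r"Failed password for (?P<user>\S+) from (?P<ip>[\d.]+) port (?P<port>\d+)"
)

def parse_text_line(line):
    """Extract named fields from one free-text line, or return None if no match."""
    match = FAILED_LOGIN.search(line)
    return match.groupdict() if match else None

def flatten(record, prefix=""):
    """Flatten nested JSON objects into dotted column names, e.g. 'src.ip'."""
    flat = {}
    for key, value in record.items():
        name = prefix + key
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=name + "."))
        else:
            flat[name] = value
    return flat

def json_lines_to_table(text):
    """Parse JSON lines into (columns, rows); fields absent from a record become None."""
    records = [flatten(json.loads(line)) for line in text.splitlines() if line.strip()]
    columns = sorted({col for rec in records for col in rec})
    rows = [[rec.get(col) for col in columns] for rec in records]
    return columns, rows

text_row = parse_text_line(TEXT_LINE)
columns, rows = json_lines_to_table(JSON_LINES)
print(text_row)
print(columns)
for row in rows:
    print(row)
```

The `None` that appears in Bob's `src.port` column shows the trade-off: imposing a fixed schema on semi-structured data turns optional fields into missing values that later cleaning has to handle.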

Example: Tabular Dataset from KDD Cup '99

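The KDD Cup '99 dataset is widely used for intrusion detection research. It contains connection records derived from simulated network traffic, each described by 41 features and labeled either as normal or as a specific attack type; the attack types are grouped into four categories (DoS, Probe, R2L, U2R) [6].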

Here is a simplified tabular representation:

| Duration (s) | Protocol | Service | SrcBytes | DstBytes | Label |
|---|---|---|---|---|---|
| 0 | tcp | http | 181 | 5450 | normal |
| 0 | udp | domain | 105 | 146 | normal |
| 0 | tcp | ftp | 239 | 486 | attack |

This structure allows the application of statistical and machine learning techniques to identify anomalous behaviors.
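As a small illustration of what the tabular form enables, the sketch below loads only the three illustrative rows of the table above (they are not real KDD Cup '99 records) and computes class counts, a per-class mean of one numeric feature, and a one-hot encoding of a categorical column. The snake_case column names are an assumption made for the example.

```python
from collections import Counter, defaultdict
from statistics import mean

# The three rows of the simplified table above; column names are assumed.
ROWS = [
    {"duration": 0, "protocol": "tcp", "service": "http", "src_bytes": 181, "dst_bytes": 5450, "label": "normal"},
    {"duration": 0, "protocol": "udp", "service": "domain", "src_bytes": 105, "dst_bytes": 146, "label": "normal"},
    {"duration": 0, "protocol": "tcp", "service": "ftp", "src_bytes": 239, "dst_bytes": 486, "label": "attack"},
]

# Class balance: how many rows carry each label.
label_counts = Counter(row["label"] for row in ROWS)

# Group a numeric feature by label to compare behavior across classes.
src_by_label = defaultdict(list)
for row in ROWS:
    src_by_label[row["label"]].append(row["src_bytes"])
mean_src_bytes = {label: mean(values) for label, values in src_by_label.items()}

# One-hot encode the categorical "protocol" column so numeric models can use it.
protocols = sorted({row["protocol"] for row in ROWS})
one_hot = [[int(row["protocol"] == p) for p in protocols] for row in ROWS]

print(label_counts)
print(mean_src_bytes)
print(protocols, one_hot)
```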

Dataset Management

Proper dataset management is crucial for reliable and replicable results. Main steps include:

- Data cleaning: removing missing, duplicate, or erroneous values.

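- Normalization and standardization: rescaling variables to comparable ranges so that features with large magnitudes (such as byte counts) do not dominate distance-based or gradient-based models [4].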

- Anonymization and privacy: protecting sensitive data such as user identities and IP addresses, which is essential in cybersecurity [5].

- Feature selection: choosing the most relevant features to improve model performance and reduce computational complexity [2].
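The cleaning, scaling, and feature-selection steps above can be sketched on a toy dataset with the Python standard library. Everything here is an assumption for illustration: the field names, the rule that a negative byte count is erroneous, and the use of a zero-variance filter as a deliberately simple stand-in for feature selection.

```python
from statistics import mean, pstdev

# Toy records; None marks a missing value. Field names are hypothetical.
records = [
    {"src_bytes": 181, "dst_bytes": 5450, "flag": 1},
    {"src_bytes": 181, "dst_bytes": 5450, "flag": 1},  # exact duplicate
    {"src_bytes": 105, "dst_bytes": None, "flag": 1},  # missing value
    {"src_bytes": 239, "dst_bytes": 486, "flag": 1},
    {"src_bytes": -5, "dst_bytes": 300, "flag": 1},    # impossible negative byte count
    {"src_bytes": 320, "dst_bytes": 1200, "flag": 1},
]

def clean(rows):
    """Drop rows with missing or negative values, then drop exact duplicates."""
    seen, out = set(), []
    for row in rows:
        if any(v is None or v < 0 for v in row.values()):
            continue
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            out.append(row)
    return out

def standardize(values):
    """z-score: (x - mean) / population std; a constant column maps to 0."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (x - mu) / sigma for x in values]

def min_max(values):
    """Rescale to [0, 1]; a constant column maps to 0."""
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (x - lo) / (hi - lo) for x in values]

cleaned = clean(records)
columns = {name: [row[name] for row in cleaned] for name in cleaned[0]}

# Filter-style feature selection: drop zero-variance (constant) columns.
selected = [name for name, vals in columns.items() if pstdev(vals) > 0]

scaled = {name: standardize(columns[name]) for name in selected}
unit = min_max(columns["dst_bytes"])
print(len(cleaned), selected)
print(scaled["src_bytes"], unit)
```

In a real pipeline the scaling parameters (mean, standard deviation, or min and max) would be computed on the training split only and then reused on test data, so that no information leaks from the evaluation set.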

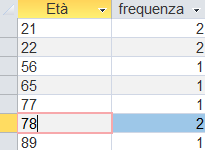
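For the anonymization step listed above, two common techniques can be sketched with the standard library: keyed pseudonymization of identifiers with HMAC-SHA256, and generalization of IPv4 addresses to a /24 network. This assumes a secret key held outside the dataset (`SECRET_KEY` is a placeholder), and the helper names are hypothetical. Strictly speaking this is pseudonymization: the same user still maps to the same token, which preserves behavior analysis but does not by itself rule out re-identification.

```python
import hashlib
import hmac
import ipaddress

# Placeholder only: in practice the key comes from a key store, never from the code.
SECRET_KEY = b"replace-with-a-secret-from-a-key-store"

def pseudonymize(value: str, key: bytes = SECRET_KEY) -> str:
    """Keyed hash: same input -> same token, not reversible without the key."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def truncate_ipv4(ip: str, prefix_len: int = 24) -> str:
    """Generalize an IPv4 address to its network; /24 keeps the first three octets."""
    return str(ipaddress.ip_network(f"{ip}/{prefix_len}", strict=False))

event = {"user": "alice", "src_ip": "198.51.100.57", "action": "login"}
anonymized = {
    "user": pseudonymize(event["user"]),
    "src_net": truncate_ipv4(event["src_ip"]),
    "action": event["action"],
}
print(anonymized)
```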

Data Distribution Concepts

Data distribution describes how values of a variable or set of variables are spread. Understanding distribution is essential to:

- Choose appropriate statistical tests

- Identify outliers and anomalies

- Improve predictive modeling [4]

Types of Distribution

- Univariate — examines one variable at a time, analyzing mean, median, mode, variance, skewness, and kurtosis [4].

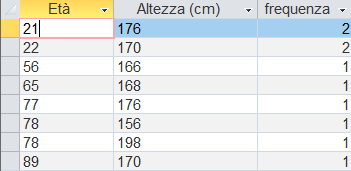

- Bivariate — examines the relationship between two variables, using scatter plots, contingency tables, and correlations [4].

- Multivariate — involves multiple variables simultaneously, used in PCA, clustering, and complex predictive models [4].
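The univariate and bivariate summaries above can be computed with Python's `statistics` module. This is a minimal sketch on hypothetical hourly counts for a single host. `pearson` is a small helper written out for clarity (Python 3.10+ also provides `statistics.correlation`). Multivariate methods such as PCA are normally done with a numerical library and are not shown.

```python
from statistics import mean, median, multimode, pstdev, pvariance

def pearson(xs, ys):
    """Pearson correlation coefficient r between two equal-length samples."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)

# Hypothetical hourly counts for one host (illustrative numbers, not real data).
failed_logins = [2, 3, 3, 4, 12]
requests = [100, 120, 130, 150, 400]

# Univariate: location and dispersion of a single variable.
summary = {
    "mean": mean(failed_logins),
    "median": median(failed_logins),
    "mode": multimode(failed_logins),
    "variance": pvariance(failed_logins),
    "std": pstdev(failed_logins),
}

# Bivariate: linear association between two variables.
r = pearson(failed_logins, requests)
print(summary)
print(f"r = {r:.3f}")
```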

Other Relevant Concepts

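- Probability distributions: the Normal, Poisson, and Binomial distributions are useful for modeling events such as the number of access attempts per time interval (Poisson) or the number of failed attempts out of a fixed number of tries (Binomial) [4].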

- Outliers: extreme values that may indicate anomalies or intrusions [1][5].

- Patterns and correlations: identifying dependencies between variables can reveal abnormal behaviors or vulnerabilities [2][4].
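Two common rules for flagging outliers are sketched below: the z-score rule and Tukey's interquartile-range (IQR) fences. The per-minute counts are hypothetical and the thresholds are conventional defaults, not values from the sources. With only ten points, the single spike also inflates the standard deviation, so its own z-score stays just under 3 and the z-score rule at that threshold misses it, while the quartile-based IQR rule still flags it.

```python
from statistics import mean, pstdev, quantiles

def zscore_outliers(values, threshold=3.0):
    """Indices whose |x - mean| / std exceeds the threshold."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []
    return [i for i, x in enumerate(values) if abs(x - mu) / sigma > threshold]

def iqr_outliers(values, k=1.5):
    """Indices outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [i for i, x in enumerate(values) if x < low or x > high]

# Hypothetical failed-login counts per minute; the last minute is a burst.
failed_per_minute = [3, 4, 2, 5, 3, 4, 3, 2, 4, 60]

print(zscore_outliers(failed_per_minute))       # -> []
print(zscore_outliers(failed_per_minute, 2.5))  # -> [9]
print(iqr_outliers(failed_per_minute))          # -> [9]
```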

Mathematical Formulas for Distributions

Measures of location and dispersion

- Population mean: \[ \mu = \frac{1}{N}\sum_{i=1}^N x_i \]

- Sample mean: \[ \bar{x} = \frac{1}{n}\sum_{i=1}^n x_i \]

- Population variance: \[ \sigma^2 = \frac{1}{N}\sum_{i=1}^N (x_i-\mu)^2 \]

- Sample variance (unbiased): \[ s^2 = \frac{1}{n-1}\sum_{i=1}^n (x_i-\bar{x})^2 \]

- Standard deviation: \[ \sigma=\sqrt{\sigma^2},\qquad s=\sqrt{s^2} \]
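The location and dispersion formulas above translate directly into code. The sketch implements them term by term (dividing by \(N\) for the population variance and by \(n-1\) for the sample variance) and cross-checks the results against the standard library's `statistics.pvariance` and `statistics.variance`. The data values are illustrative.

```python
import math
import statistics

def population_mean(xs):
    """mu = (1/N) * sum(x_i)"""
    return sum(xs) / len(xs)

def population_variance(xs):
    """sigma^2 = (1/N) * sum((x_i - mu)^2)"""
    mu = population_mean(xs)
    return sum((x - mu) ** 2 for x in xs) / len(xs)

def sample_variance(xs):
    """s^2 = (1/(n-1)) * sum((x_i - x_bar)^2), the unbiased estimator."""
    n = len(xs)
    if n < 2:
        raise ValueError("sample variance needs at least two observations")
    x_bar = sum(xs) / n
    return sum((x - x_bar) ** 2 for x in xs) / (n - 1)

data = [2, 4, 4, 4, 5, 5, 7, 9]
sigma2 = population_variance(data)
s2 = sample_variance(data)
# mean 5.0, population variance 4.0, population std 2.0
print(population_mean(data), sigma2, math.sqrt(sigma2), s2, math.sqrt(s2))

# Cross-check against the standard library's implementations.
assert math.isclose(sigma2, statistics.pvariance(data))
assert math.isclose(s2, statistics.variance(data))
```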

Common probability distributions

- Normal distribution (pdf): \[ f(x)=\frac{1}{\sigma\sqrt{2\pi}}\exp\!\left(-\frac{(x-\mu)^2}{2\sigma^2}\right) \]

- Binomial distribution (pmf): \[ P(X=k)=\binom{n}{k} p^k (1-p)^{\,n-k},\qquad k=0,\dots,n \]

- Poisson distribution (pmf): \[ P(X=k)=\frac{\lambda^k e^{-\lambda}}{k!},\qquad k=0,1,2,\dots \]
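The three densities above can be evaluated with the `math` module alone. The sketch uses them for tail probabilities of the kind mentioned earlier for access attempts. The baseline rate \(\lambda = 2\) failed logins per minute and the Binomial setting (20 attempts, failure probability 0.05) are hypothetical, and treating attempts as independent is itself a modeling assumption.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Normal density f(x) with mean mu and standard deviation sigma > 0."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p), k = 0..n."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

def poisson_pmf(k, lam):
    """P(X = k) for X ~ Poisson(lam), k = 0, 1, 2, ..."""
    return lam**k * math.exp(-lam) / math.factorial(k)

# Hypothetical baseline: a host averages lam = 2 failed logins per minute.
lam = 2.0
# Upper tail P(X >= 8) = 1 - P(X <= 7): how surprising is a burst of 8 or more?
p_burst = 1 - sum(poisson_pmf(k, lam) for k in range(8))

# Hypothetical: 20 independent attempts, each failing with probability 0.05.
p_three_or_more = 1 - sum(binomial_pmf(k, 20, 0.05) for k in range(3))

print(f"Poisson P(X >= 8): {p_burst:.5f}")
print(f"Binomial P(Y >= 3): {p_three_or_more:.5f}")
print(f"Normal pdf at the mean: {normal_pdf(0.0):.5f}")
```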

Moments and shape

- Skewness (sample): \[ \text{skew}=\frac{\frac{1}{n}\sum_{i}(x_i-\bar{x})^3}{\left(\frac{1}{n}\sum_{i}(x_i-\bar{x})^2\right)^{3/2}} \]

- Excess kurtosis (sample): \[ \text{kurtosis}=\frac{\frac{1}{n}\sum_{i}(x_i-\bar{x})^4}{\left(\frac{1}{n}\sum_{i}(x_i-\bar{x})^2\right)^{2}} - 3 \]
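Both shape formulas above are ratios of central moments \(m_k = \frac{1}{n}\sum_i (x_i-\bar{x})^k\). The sketch computes them that way: skewness is \(m_3 / m_2^{3/2}\), and excess kurtosis is \(m_4 / m_2^2 - 3\). The right-tailed sample of bytes per session is hypothetical.

```python
import math

def central_moment(xs, order):
    """m_k = (1/n) * sum((x_i - x_bar)^k), as in the formulas above."""
    x_bar = sum(xs) / len(xs)
    return sum((x - x_bar) ** order for x in xs) / len(xs)

def skewness(xs):
    """m_3 / m_2^(3/2): zero for symmetric data, positive for a long right tail."""
    return central_moment(xs, 3) / central_moment(xs, 2) ** 1.5

def excess_kurtosis(xs):
    """m_4 / m_2^2 - 3: zero for a normal distribution."""
    return central_moment(xs, 4) / central_moment(xs, 2) ** 2 - 3

symmetric = [1, 2, 3, 4, 5]
# Hypothetical bytes-per-session sample with one very large session.
right_tailed = [120, 130, 125, 140, 135, 128, 2000]

print(skewness(symmetric), excess_kurtosis(symmetric))
print(skewness(right_tailed), excess_kurtosis(right_tailed))
```

The evenly spaced sample has negative excess kurtosis (lighter tails than a normal distribution). The single large session produces both strong positive skew and heavy tails, which is typical of byte-count features in network data.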

Chi-squared statistic

Used to compare observed and expected frequencies, for example in goodness-of-fit and independence tests:

\[ \chi^2=\sum_{i}\frac{(O_i-E_i)^2}{E_i}, \]

where \(O_i\) are observed counts and \(E_i\) are expected counts.
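A goodness-of-fit check with this statistic might look like the sketch below. It compares the protocol counts in a traffic window against a baseline mix. The counts and baseline proportions are hypothetical. The p-value uses the fact that for exactly 2 degrees of freedom the chi-squared upper tail is \(e^{-x/2}\); other degrees of freedom would need a statistics library or a table. The commonly cited rule of thumb that every expected count be at least about 5 holds here.

```python
import math

def chi_squared(observed, expected):
    """Pearson's statistic: sum over categories of (O_i - E_i)^2 / E_i."""
    if len(observed) != len(expected):
        raise ValueError("observed and expected must have the same length")
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))

# Hypothetical: protocol counts in a 200-connection window vs. a baseline mix.
observed = [90, 60, 50]     # tcp, udp, icmp
baseline = [0.5, 0.3, 0.2]  # assumed expected proportions
total = sum(observed)
expected = [p * total for p in baseline]

stat = chi_squared(observed, expected)
df = len(observed) - 1      # goodness of fit, no parameters estimated from the data

# Valid for df == 2 only: the chi-squared upper tail is exp(-x / 2).
p_value = math.exp(-stat / 2)
print(f"chi2 = {stat:.2f}, df = {df}, p = {p_value:.3f}")
```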

Final Considerations

Proper analysis of datasets and distributions is essential in cybersecurity for identifying threats and optimizing defense systems. The combined use of structured, unstructured, and semi-structured datasets, along with rigorous data management, allows the development of robust and reliable statistical and machine learning models [1][6].

References

[1] Provost, F., & Fawcett, T. (2013). Data Science for Business. O'Reilly Media.

[2] Han, J., Pei, J., & Kamber, M. (2011). Data Mining: Concepts and Techniques. Elsevier.

[3] Jurafsky, D., & Martin, J. H. (2020). Speech and Language Processing. Pearson.

[4] Montgomery, D. C., & Runger, G. C. (2018). Applied Statistics and Probability for Engineers. Wiley.

[5] Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning. MIT Press.

[6] Tavallaee, M., et al. (2009). A detailed analysis of the KDD CUP 99 data set. IEEE Symposium on Computational Intelligence for Security and Defense Applications (CISDA).